In this blog post, I will discuss an interesting topic in data mining, which is the topic of sequential rule mining. It consists of discovering rules in sequences. This data mining task has many applications for example for analyzing the behavior of customers in supermarkets or users on a website.

Introduction

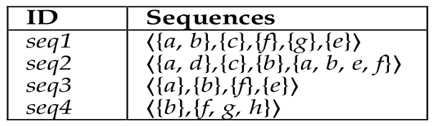

Before, discussing this topic, let me talk a little bit about the context. There has been a lot of work in the field of data mining about pattern mining . The goal of pattern mining is to discover useful, novel and/or unexpected patterns in databases. In this blog post, we will be interested by a specific type of database called sequences databases. A sequence database contains some sequences. For example, consider the following database:

This database contains four sequences named seq1, seq2, seq3 and seq4. For our example, consider that the symbols “a”, “b”, “c”, d”, “e”, “f”, “g” and “h” respectively represents some items sold in a supermarket. For example, “a” could represent an “apple”, “b” could be some “bread”, “c” could denote “cake”, etc.

Now, a sequence is an ordered list of sets of items. For our example, we will assume that each sequence represents what a customer has bought in our supermarket over time. For example, consider the second sequence “seq2”. This sequence indicates that the second customer bought items “a” and “d” together, than bought item “c”, then bought “b”, and then bought “a”, “b”, “e” and “f” together.

Sequences are a very common type of data structures that can be found in many domains such as bioinformatics (DNA sequence), sequences of clicks on websites, the behavior of learners in e-learning, sequences of what customers buy in retail stores, sentences of words in a text, etc. It is to be noted that sequence can be ordered by time or other properties (e.g. the order of nucleotides in a DNA sequence).

Discovering sequential patterns in sequences

An important data mining problem is to design algorithm for discovering hidden patterns in sequences. There have been a lot of research on this topic in the field of data mining and various algorithms have been proposed.

In the following, Iwill discuss two types of patterns that can be found. I will first discuss sequential patterns. Then, I will explain some of their limitations and then discuss sequential rules.

A sequential pattern is a subsequence that appear in several sequences of a database. For example, the sequential pattern <{a}{c}{e}> appears in the two first sequences of our database. This pattern is quite interesting. It indicates that customers who bought {a}, often bought {c} after, followed by buying {e}. Such a pattern is said to have a support of two sequences because it appears in two sequences from the database. Several algorithms have been proposed for finding all sequential patterns in a database such as CM-SPADE, PrefixSpan and GSP. These algorithms takes as input a sequence database and a minimum support threshold (minsup). Then, they will output all sequential patterns having a support no less than minsup. Those patterns are said to be the frequent sequential patterns.

For example, for the above example, if we run CM-SPADE with minsup = 3, we will find the following frequent sequential patterns:

<{a}> with a support of 3 sequences

<{a},{e}> with a support of 3 sequences

<{a},{f}> with a support of 3 sequences

<{b},{e}> with a support of 3 sequences

<{b},{f}> with a support of 4 sequences

Sequential patterns can be quite interesting. In the example, we can learn that buying item “b” is followed by buying item “e” in 3 sequences. However, sequential patterns can be misleading. An important limitation of sequential patterns is that there is no assessment of the probability that a pattern will be followed. Let me explain this in more details. For example, if we consider again the pattern <{b},{e}>. This pattern is said to appear in 3 sequences. It may thus seems likely that if someone buy “b”, he will also buy “e” after. But how likely? We can observe that item “b” appears in four sequences. Thus, the probability that “e” appears after “b” is actually 3 / 4 = 75 % (i.e. P(e|b)= 75%). But sequential patterns only indicate how often the pattern appears. They do not provide any indication about this probability.

Discovering sequential rules in sequences

This now lead us to the main topic of this post which is sequential rule mining. Sequential rule mining has been proposed as an alternative to sequential pattern mining to take into account the probability that a pattern will be followed. I will provide a few definitions and then we will look at a full example.

A sequential rule is a rule of the form X -> Y where X and Y are sets of items (itemsets). A rule X ->Y is interpreted as if items in X occurs (in any order), then it will be followed by the items in Y (in any order). For example, consider the rule {a} -> {e,f}. It means that if a customer buy item “a”, then the customer will later buy the items “e” and “f”. But the order among items in {e,f} is not important. This means that a customer may buy “e” before “f” or “f” before “e”.

To find sequential rules, two measures are generally used: the support and the confidence. The support of a rule X -> Y is how many sequences contains the items from X followed by the items from Y. For example, the support of the rule {a} -> {e,f} is 3 sequences because {a} appears before the items from {e,f} in three sequences (seq1, seq2 and seq3).

The confidence of a rule X -> Y is the support of the rule divided by the number of sequences containing the items from X. It can be understood as the conditional probability P(Y|X). For example, the confidence of the rule {a} -> {e,f} is 1 (or 100 % if written as a precentage), because every time that a customer buy item “a”, he then buy “e” and “f” in the example database. Another example is the rule {a} -> {b}. This rule has a support of 2 sequences and a confidence of 0.66 (that is 66%).

A sequential rule mining algorithm such as RuleGrowth, ERMiner and CMRules will output all sequential rules having a support and a confidence respectively no less than some thresholds minsup and minconf set by the user. For example, consider again the example database and suppose that the user set minsup = 0.5 and minconf = 60%. The following rules are found by RuleGrowth:

{a,b,c} -> {e} support = 2 sequences confidence = 100 %

{a} -> {c,e,f} support = 2 sequences confidence = 66%

{a,b} -> {e,f} support = 3 sequences confidence = 100%

{b} -> {e,f} support = 3 sequences confidence = 75 %

{a} -> {e,f} support = 3 sequences confidence = 100%

{c} -> {e,f} support = 2 sequences confidence = 100%

{a} -> {b} support = 2 sequences confidence = 66%

These rules can be viewed as more interesting than sequential patterns since they give a measure of confidence that they will be followed. For example, it is very informative to know that some rules such as {c} -> {e,f} have a confidence of 100 %.

In the past, I have carried a study with my student to compare the prediction accuracy of sequential patterns and sequential rules. In that study, we found sequential rules can provide a much higher prediction accuracy than sequential patterns when the patterns are used for sequence prediction. The reason is that sequential rules consider the probability (confidence), while sequential patterns do not.

Extensions of the task of sequential rule mining

In the previous paragraphs, I have introduced the topic of sequential rule mining. But note there also exists several extensions of the problem of sequential rule mining. These extensions have been proposed to address specific needs. I will provide a brief overview of a few extensions.

- Discovering the top-k sequential rules. The idea is to discover the k most frequent rules in a dataset having at least a confidence no less than minconf. For example, a user may specify that he wants to find the top 1000 rules having a confidence of at least 75 %. Some algorithms for this task are TopSeqRules and TNS.

- Discovering sequential rules with a window size constraint. This algorithm let the user find rules of the form X -> Y where X and Y must be close to each other with respect to time. For example, a user may want to find rules appearing whithin three consecutive itemsets in sequences. This is interesting for example for analyzing sequence of web clicks. An algorithm for this task is TRuleGrowth.

- Discovering high-utility sequential rules. Another extension is to discover rules where items may be annotated with quantities in sequences and each item may have a unit profit. For example, we may have a sequence where a customer bought three breads, then two apples and two bottle of milk and these items may have some unit profit of 1$, 2$ and 1.50$. The goal of high-utility sequential rule mining is to find rules that generate a high profit and have a high confidence (high-utility rules). An algorithm for this task is HUSRM.

Open-source implementations and datasets

There exists several algorithms for sequential rule mining and sequential pattern mining that have been proposed. Java implementations of the state-of-the art algorithms are currently offered in my open-source data mining library named –SPMF.

It offers several state-of-the-art algorithms for sequential rule mining such as ERMiner (2014), TNS (2013), RuleGrowth (2011), TopSeqRules (2011), and CMRules (2010). Besides, SPMF offers several algorithms for sequential pattern mining such as CM-SPADE (2014), VMSP (2014), LAPIN (2005) and PrefixSpan (2004). To our knowledge, ERMiner is the fastest sequential rule mining algorithm. But RuleGrowth is still quite fast and consumes less memory. You can try the above algorithms by going to the SPMF website. On the website, you will find instructions about how to run algorithms and some datasets on the dataset page.

SPMF also offers hundreds of algorithm to find other types of patterns such as sequential rules, sequential patterns, frequent itemsets and high utility itemsets.

Applications of sequential rule mining

Some example of applications of sequential rule mining are e-learning, manufacturing simulation, quality control, web page prefetching, anti-pattern detection in service based systems, embedded systems, alarm sequence analysis, restaurant recommendation. For example, here are a few papers describing such applications:

E-learning

Fournier-Viger, P., Faghihi, U., Nkambou, R., Mephu Nguifo, E.: CMRules: Mining

Sequential Rules Common to Several Sequences. Knowledge-based Systems, Elsevier,

25(1): 63-76 (2012)

Toussaint, Ben-Manson, and Vanda Luengo. “Mining surgery phase-related sequential rules from vertebroplasty simulations traces.” Artificial Intelligence in Medicine. Springer International Publishing, 2015. 35-46.

Faghihi, Usef, Philippe Fournier-Viger, and Roger Nkambou. “CELTS: A Cognitive Tutoring Agent with Human-Like Learning Capabilities and Emotions.” Intelligent and Adaptive Educational-Learning Systems. Springer Berlin Heidelberg, 2013. 339-365.

Manufacturing simulation

Kamsu-Foguem, B., Rigal, F., Mauget, F.: Mining association rules for the quality

improvement of the production process. Expert Systems and Applications 40(4),

1034-1045 (2012)

Quality control

Bogon, T., Timm, I. J., Lattner, A. D., Paraskevopoulos, D., Jessen, U., Schmitz,

M., Wenzel, S., Spieckermann, S.: Towards Assisted Input and Output Data Analysis

in Manufacturing Simulation: The EDASIM Approach. In: Proc. 2012 Winter

Simulation Conference, pp. 257–269 (2012)

Web page prefetching

Fournier-Viger, P. Gueniche, T., Tseng, V.S.: Using Partially-Ordered Sequential

Rules to Generate More Accurate Sequence Prediction. Proc. 8th International Conference

on Advanced Data Mining and Applications, pp. 431-442, Springer (2012)

Anti-pattern detection in service based

systems,

Nayrolles, M., Moha, N., Valtchev, P.: Improving SOA antipatterns detection in

Service Based Systems by mining execution traces. In: Proc. 20th IEEE Working

Conference on Reverse Engineering, pp. 321-330 (2013)

Embedded systems

Leneve, O., Berges, M., Noh, H. Y.: Exploring Sequential and Association Rule

Mining for Pattern-based Energy Demand Characterization. In: Proc. 5th ACM

Workshop on Embedded Systems For Energy-Efficient Buildings. ACM, pp. 1–2

(2013)

Alarm sequence analysis

Celebi, O.F., Zeydan, E., Ari, I., Ileri, O., Ergut, S.: Alarm Sequence Rule Mining

Extended With A Time Confidence Parameter. In: Proc. 14th Industrial Conference

on Data Mining (2014)

Ileri, Omer, and Salih Ergüt. “Alarm Sequence Rule Mining Extended With A Time Confidence Parameter.” (2014).

Recommendation

Jannach, Dietmar, and Simon Fischer. “Recommendation-based modeling support for data mining processes.” Proceedings of the 8th ACM Conference on Recommender systems. ACM, 2014.

Interestingly, the above work found that sequential rules found by CMRules provided better results than other compared patterns found using FPGrowth and other algorithms.

Jannach, D., Jugovac, M., & Lerche, L. (2015, March). Adaptive Recommendation-based Modeling Support for Data Analysis Workflows. In Proceedings of the 20th International Conference on Intelligent User Interfaces (pp. 252-262). ACM.

Restaurant recommendation

Han, M., Wang, Z., Yuan, J.: Mining Constraint Based Sequential Patterns and

Rules on Restaurant Recommendation System. Journal of Computational Information

Systems 9(10), 3901-3908 (2013)

Customer behavior analysis

Noughabi, Elham Akhond Zadeh, Amir Albadvi, and Behrouz Homayoun Far. “How Can We Explore Patterns of Customer Segments’ Structural Changes? A Sequential Rule Mining Approach.” Information Reuse and Integration (IRI), 2015 IEEE International Conference on. IEEE, 2015.

What is the difference between sequential rules, association rules and episode rules?

A question that some reader familiar with data mining may have is what is the difference between sequential rules and association rules? The answer is as follows. The sequential rules are found in sequences while association rules are found in records (transactions) containing items that are not ordered. In other words, the order between items such as time is not considered in association rule mining. Some sequential rule mining algorithms such as CMRules will first discover association rules, and then filter out some rules using the sequential ordering to keep only the sequential rules.

It should be noted that there are also some other terms that are used in the research papers. For example, there are a few papers that will talk about “temporal association rules”. This can be viewed as some kind of sequential rules. However, between “temporal association rules” and sequential rules, I prefer the term sequential rules. The reason is that a sequence is not always ordered by time. For example, in a sequence of words, there is an order but it is not based on time. Thus, I think that sequential rules is a better name because it means that there is some order but this order could be based on time or something else, while “temporal” is a name that refers to time.

There are also some other names that are used… Generally, if we find the rules in many sequences, we call them sequential rules. But if we find the rules in only one sequence, some people will call these rules “episode rules”. In SPMF, we recently added some code for discovering episode rules in a single long sequences such as the POERM algorithm. Episode rules can be viewed as a special type of sequential rules for a single sequence.

Conclusion

In this blog post, I have given an overview of the tasks of sequential rule mining and sequential pattern mining, which aims at discovering patterns in sequences. Hope that you enjoyed reading it 😉 For researchers, there are many possibility of research on this topic.

Philippe Fournier-Viger is a professor of Computer Science and also the founder of the open-source data mining software SPMF, offering more than 80 data mining algorithms.

Pingback: An introduction to frequent pattern mining - The Data Mining & Research Blog

Another awesome article, thank you again!

Pingback: An introduction to the discovery of periodic patterns in data - The Data Mining & Research Blog

Thanks for the post. However, I have a question: what are differences between sequence mining and rule mining ? They look similar, aren’t they?

Hello, Glad you like the post. Sometimes the terms may have different meaning. But for me “Sequence mining” is more general. It means to discover patterns in sequences. But it does not say what kind of patterns. In a sequence, we could find many kind of patterns such as “sequential patterns”, “sequential rules”, “periodic patterns”, etc. So, sequential rule mining is one task that you could call sequence mining, but there are also other tasks. Besides, some people will also discover patterns in 1 sequence, while other will find patterns that are common to multiple sequences. There are in fact many variations of these tasks. For example, if you do sequential rule mining in a single sequence, it is usually called episode rule mining instead of sequential rule mining, but you could still call it sequence mining.

Pingback: Datasets of 30 English novels for pattern mining and text mining - The Data Mining BlogThe Data Mining Blog

Pingback: (video) Discovering interpretable high utility patterns in databases - The Data Mining BlogThe Data Mining Blog

Pingback: The future of pattern mining - The Data Mining BlogThe Data Mining Blog

Pingback: An Introduction to Sequential Pattern Mining - The Data Mining BlogThe Data Mining Blog

Thank you for the post. Do you think sequential rule mining is becoming obsolete now that machine learning and deep learning have become so popular? I think if we have a small volume of data, or care about interpretability, that’s when pattern mining is more beneficial than ML. Another thing I can think of is when we have only categorical data, and transforming it to numbers to use ML would lead to illogical calculations. For example, |color X-color Y|^2 doesn’t make much sense (how ever I saw papers that do such thing to mined patterns to cluster them, what is your opinion about this approach?). In that case we may use pattern mining.

Hi, thanks for reading and commenting on the blog. That is some interesting topic. I will give you my opinion. Nowadays, there are a lot of people doing machine learning, and especially deep learning because there has been some great progress in recent years in that field especially for image and text processing. So it has become really attractive to work on that. However, I think that other fields of research also have a lot of unsolved problems that are interesting and challenging. So for research, I think there are still many many things to do in pattern mining.

As for deep learning, I think the key benefit is that it is outstanding for prediction tasks, and in recent years, it has shown to perform best for many prediction tasks. However, there are still some important limitations. First, they often require huge amount of data and processing power. For example, if we look at these state-of-the-art neural nets like GPT-3 for language, they have 175 billion parameters, trained on 570 GB of text, and require 10 million US$ to train. For the research on neural networks, I think a key challenge is how to scale these neural networks because always requiring more data and processing power is perhaps not the only solution. Perhaps that the neural networks themselves need to be redesigned. Second, many of these models are very accurate but they operate like a black box. So, as you said, interpretability is a problem. When I read some papers about deep learning, I feel that sometimes the researchers are just trying different architecture and parameters to see what gives the best results but maybe cannot totally explain why. And sometimes the performance improvement is really small.

As for pattern mining, I think it is not competing directly with deep learning, SVM etc. There are two goals to pattern mining. The first one is to explore the data to understand or find something interesting in the data. In thatcase, the goal is not to make a prediction but to explore or understand the data. In this case the user may perhaps not even initially know clearly what he is looking for, but he wants to explore the data using pattern mining algorithms to find some interesting patterns that may help to understand the data. So the user may use different parameters values and even use different pattern mining algorithms to learn about the data. For example, one may look at the logs of a computer system and find that there is a rule with 100% confidence that some error is always followed by another error. This is something that can be understood by humans and maybe useful to better handle computer errors.

The second goal to use these patterns to take some decisions or even to make some predictions. In that case, pattern mining (such as sequential rule mining) can probably not compete with top machine learning methods in terms of accuracy when the problem is hard and there is a lot of data, but at least patterns provide good interpretability. So as you said for interpretability is valuable. This is the same reasons why sometimes people use techniques like decision trees that are perhaps not always the best but are not so hard to understand (when the tree is small).

For the categorical data example… I am not so sure about the details of the example. But I think yes, it is quite possible to misuse some techniques if we don’t understand the assumptions… This is a danger.

As for the size of data… pattern mining algorithms will not scale to 570 GB of data, but they often can handle a few million records, and in real life we dont always have the big data… so I think there can be many applications. For example, lets say that you collect data about students in a classroom with 30 students… Maybe there are only 30 records.. Or if you analyze transactions from a small store or restaurant, perhaps that there just a few thousands of transactions. As for scaling to larger datasets, there are some sampling techniques that could be used, and for real time data, there is some algorithms to mine patterns in data streams (can potentially process an infinite stream of data). But I agree most techniques for pattern mining are limited by the data size.

Also there are a variety of tools that can be used to analyze data… and sometimes the most simple tools are the best.. For example, for some problems, just using linear regression can be useful, and deep learning or other advanced techniques are not required… So I think nowadays a lot of people are doing deep learning because it is the trend, but we should not forget other techniques that have several other interesting properties, and there are also a lot of unsolved research problems in other field. So in this light, I dont think pattern mining is obselete. I see it as having other advantages. Perhaps worse for prediction but better interpretability and useful for data exploration.

Also, another thing is that there are some hype cycles in research and the industry…. About artificial intelligence, in the last decades, there was a lot of hype followed by some periods where there was a lot of disapointment (called the AI winters). Now we are in the hype part of that cycle… with all the promises about AI, there is a possibility that another AI winter may happen in the next few years where the industry is disapointed by many promises that do not happen… We will see… But in the last decades there was also a lot of hype about other topics like Big Data, etc. But the truth is, in my opinion, not everyone has or need big data in the industry.. (at least at this moment). It can be good to follow the hype, but I think we should still pay attention to other topics.

That is my opinion about this 😉

Best regards,

Philippe

Thank you professor Fournier-Viger for taking the time to comment, that’s very kind of you. Regarding representing patterns as numbers and do mathematical calculations on them, here is an example https://link.springer.com/article/10.1007/s12650-019-00609-x where the authors use some form of ngrams2vec transformations to be able to cluster the sequences and visualize patterns in each cluster

Thanks for sharing. i will try to have a look at this paper when I have time.

Hi Professor Fournier-Viger,

I had a question about the extracted rules. In an example for the ERMiner algorithm in SPMF we see the following rule extracted by the algorithm:

apple,orange,bread,noodle ==> tomato #SUP: 2 #CONF: 1.0

is the order of the items in the LHS same as they have appeared in the dataset and sequences which the rule is extracted from? or should we treat is a set where the order of items is not important. For this example, which of the following conclusions is correct?

1. if the costumer buys some apples, then some oranges, then some bread and finally some noodles, we can expect him/her to buy tomatoes on his/her next trip to the market.

2. if the costumer has bought set of {apple,orange,bread,noodle}, then he/she will probably buy a tomato next time. He/she has not necessarily bought the items in (apple),(orange),(bread),(noodle) order at all times.

according to sequential rule definition, X->Y, X and Y are unordered itemsets so I think the answer to my last question is no. 2

In case we use anything but the RuleGen algorithm

Yes, the RuleGen algorithm will find rules with some order and then it would be like case (1).

For the algorithms to ERMiner, CMRules, RuleGrowth, TRuleGrowth, the answer is yes.

Hi Asal,

The (2) is correct. For ERMiner, in the left hand side of the rule there is no order. That rule just means that someone who has bought orange, apple bread noodle before ( in any order) will buy tomato in the future.

Best regards,

Philippe

Thank you so much for clarifying Professor.

In case we were interested in which sequences contributed to a partially-ordered rule such as X->Y, can we look for all the sequences that have the items in X and Y and the partial order attribute is true about the X and Y sets? And in case we were also interested in the order, look for the most frequent order of items in X and Y sets we found.

Dear Fournier-Viger

I am interested if your SPMF tool has some support for the Sequence Prediction Task based on the sequence rules already generated by some sequence rule mining algorithm (RuleGrouth for example) , such like the work in your paper “Using Partially-Ordered Sequential Rules to Generate More Accurate Sequence Prediction”, or I need to write the code which inferences the prediction result by myself?

Thank you very much!

Pingback: Analyzing the COVID-19 genome with AI and data mining techniques (paper + data + code) | The Data Mining Blog

Pingback: An Overview of Pattern Mining Techniques (by data types) | The Data Mining Blog

Hi Professor Fournier-Viger,

I am trying to understand why many people use “association rule” (AR) and “sequential rule” (SR) interchangeably. In my opinion SR are discovered in sequences while AR in transactions. Besides, I think in one of your articles you firstly extract ARs and from these SRs. Therefore, is there a difference between AR

and SR?

Thank you,

Cristina

Hi Cristina,

Yes, that is correct, those two concepts are different.

The sequential rules are found in sequences while association rules are found in records (transactions) containing items that are not ordered.

Thus, yes, sequential rules are different from association rules.

Yes, in the CMRules papers, we first extract association rules (without considering the sequential ordering) and then we recalculate the support and confidence based on the sequential ordering to find the sequential rules. Thus, in that paper, we view sequential rules as a subset of the association rules.

It should be noted that there are also some other terms that are used in the research papers. For example, there are a few papers that will talk about “temporal association rules”. This can be viewed as some kind of sequential rules. However, between “temporal association rules” and sequential rules, I prefer the term sequential rules. The reason is that a sequence is not always ordered by time. For example, in a sequence of words, there is an order but it is not based on time. THus, I think sequential rules is a better name because it means that there is some order but it could be based on time or something else, while “temporal” is a name that refers to time.

There are also some other names that are used… Generally, if we find the rules in many sequences, we call them sequential rules. But if we find the rules in only one sequence, some people will call these rules “episode rules”. In SPMF, we recently added some code for discovering episode rules in a single long sequences. You can think about this as a type of sequential rules.

Hope this help!

Best regards,

Hi Professor Fournier-Viger,

Thanks for your prompt reply. Maybe you can add a sentence about the difference between SR and AR in this article. In addition, indeed there are many people that do not take into account that the order relation between itemsets is not always temporal (can be e.g. spatial). As a result, I always mention in my research articles this key point.

Best regards,

Cristina

Hi Christina.

It is good that you mention this!

Thanks for the suggestion. I have modified the blog post to add the discussion about sequential rules vs association rules and also talk a little bit more about the ordering

Best reagrds,

Philipe

Pingback: An Introduction to Episode Mining | The Data Mining Blog

Pingback: Towards SPMF v3.0… | The Data Mining Blog

Pingback: A brief report about the IEA AIE 2019 conference | The Data Mining Blog

Pingback: How many possible association rules in a dataset? | The Data Mining Blog

Pingback: How to Analyze the Complexity of Pattern Mining Algorithms? | The Data Mining Blog

Pingback: (videos) Introduction to sequential rule mining + the CMRules algorithm | The Data Mining Blog